TL;DR

- The problem: AI coding agents can generate backend logic, but without structured infrastructure interfaces, database mutations, authentication flows, and function deployments become ambiguous and error-prone.

- The gap in existing approaches: Most backend platforms are designed for human-operated dashboards and REST interaction, not deterministic tool-based execution required by AI-native development environments.

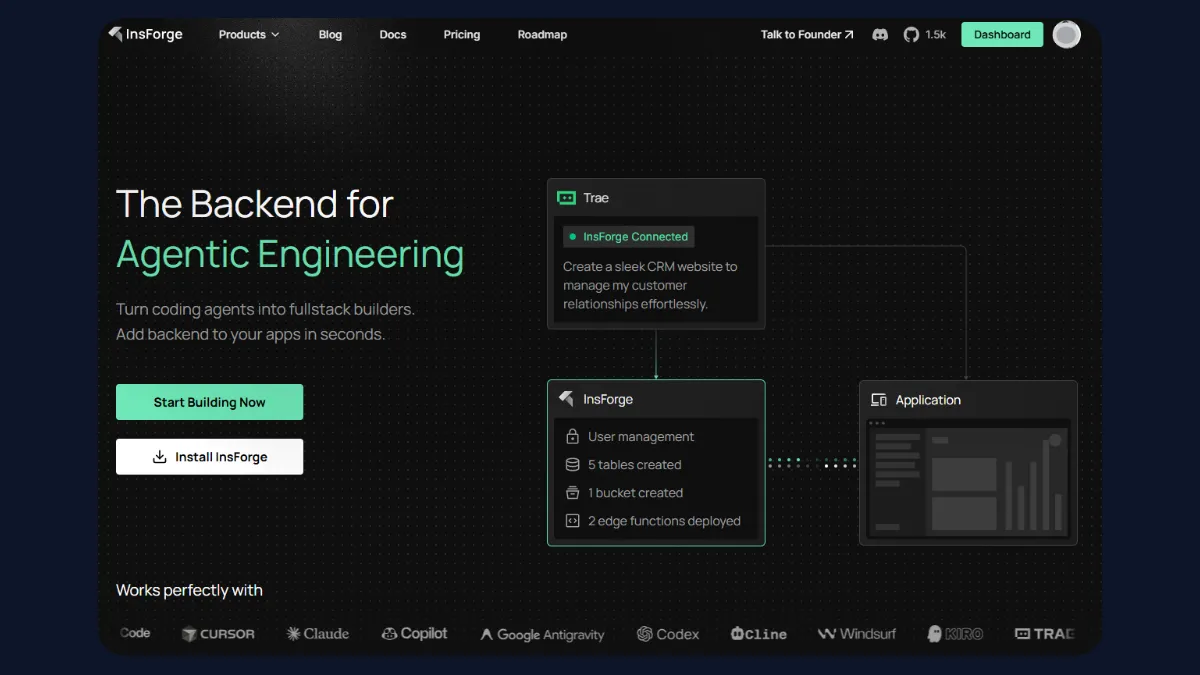

- The solution discussed here: Use MCP (Model Context Protocol) as a structured interface layer and InsForge as an MCP-native backend platform to enable typed, inspectable, and deterministic backend operations from AI coding agents.

Introduction

AI coding agents are increasingly capable of generating full-stack applications, but backend infrastructure remains a friction point. While code generation has improved, infrastructure orchestration, such as schema changes, authentication configuration, storage management, and function deployment, often relies on loosely structured API calls or manual dashboard interaction. This creates ambiguity, increases the risk of malformed operations, and limits reproducibility.

To enable reliable backend control, infrastructure must be exposed through deterministic, typed interfaces that coding assistants can reason about programmatically. The Model Context Protocol (MCP) provides a structured tool layer for this interaction model.

InsForge is an open-source backend development platform built for agentic engineering. In MCPMark evaluations across 21 real-world database tasks, InsForge MCP completed workflows 1.6x faster and consumed approximately 30% fewer tokens on average, demonstrating measurable gains in execution efficiency for agent-driven backend operations.

In this article, we will explore how to design a structured backend stack for AI coding agents using MCP, and examine how InsForge implements these requirements with practical backend tasks executed through InsForge MCP.

Agentic Development and Backend Constraints

AI-native development environments such as Claude Code, Windsurf, Copilot, Codex, and Antigravity are designed to execute tasks through tool invocation rather than simple text completion. These systems plan, select tools, pass structured arguments, and interpret responses to perform multi-step operations. While this model works well for code generation and local file manipulation, backend infrastructure introduces additional complexity.

Backend systems are stateful, permissioned, and often distributed. Schema changes, authentication configuration, storage operations, and function deployment require awareness of the current state and deterministic execution. When backend access is exposed only through dashboards or loosely defined REST endpoints, coding assistants must infer behavior without structured guarantees. This increases ambiguity and the risk of malformed mutations, inconsistent schema updates, or invalid configuration changes.

To support reliable backend automation, infrastructure must be exposed as structured, typed capabilities that can be inspected and invoked programmatically. Without this layer, agentic development remains limited to code generation rather than true infrastructure control.

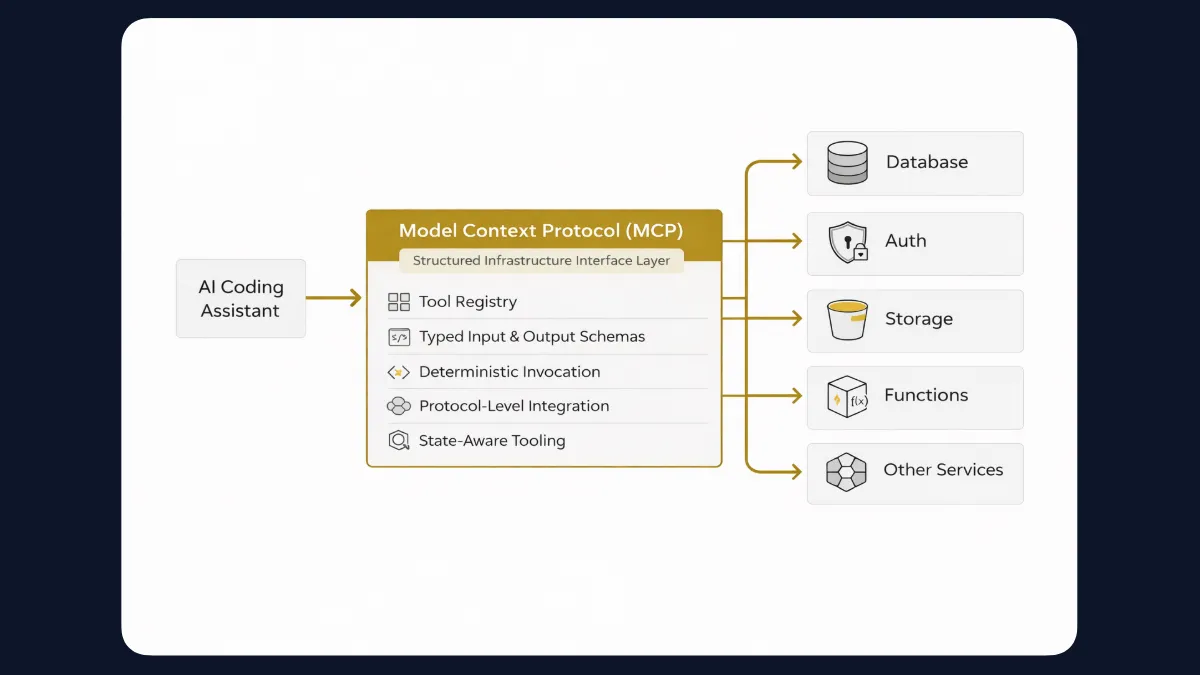

MCP as the Infrastructure Interface Layer

The Model Context Protocol acts as a structured interface between coding assistants and external systems. Instead of relying on loosely defined API calls, MCP exposes tools through a registry with typed inputs and predictable outputs.

This ensures that infrastructure operations are invoked deterministically and reduces ambiguity during execution. By formalizing how tools are described and called, MCP minimizes malformed requests and unintended side effects in backend workflows.

MCP provides:

- Tool Registry: A discoverable catalog of available tools that a coding assistant can inspect before invocation.

- Typed Input and Output Schemas: Structured argument definitions and response formats that eliminate ambiguity during tool calls.

- Deterministic Invocation Model: Explicit tool selection and argument passing, avoiding speculative or free-form execution.

- Protocol-Level Integration: Standardized interaction layer that replaces informal REST guessing with structured capability exposure.

- State-Aware Tooling: Enables assistants to query system state before performing mutations, reducing hallucinated or invalid infrastructure changes.
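To make the registry and typed-schema ideas concrete, here is a minimal in-memory sketch. The field names `name`, `description`, and `inputSchema` mirror how MCP describes tools, but the registry itself, the simplified validator, and the `create_bucket` tool are hypothetical illustrations and not part of any MCP SDK or of InsForge.

```javascript
// Hypothetical in-memory tool registry (illustration only, not an MCP SDK API).
const registry = new Map();

function registerTool(name, description, inputSchema, handler) {
  registry.set(name, { name, description, inputSchema, handler });
}

// Discovery: the catalog an assistant would inspect before choosing a tool.
function listTools() {
  return [...registry.values()].map(({ name, description, inputSchema }) => ({
    name,
    description,
    inputSchema,
  }));
}

// Simplified validator: checks required keys, unknown keys, and primitive
// types only. A real implementation would use a full JSON Schema validator.
function validateArgs(schema, args) {
  const errors = [];
  for (const key of schema.required ?? []) {
    if (!(key in args)) errors.push(`missing required argument: ${key}`);
  }
  for (const [key, value] of Object.entries(args)) {
    const prop = schema.properties[key];
    if (!prop) errors.push(`unknown argument: ${key}`);
    else if (typeof value !== prop.type) errors.push(`${key} must be of type ${prop.type}`);
  }
  return errors;
}

// Invocation: arguments are validated before the handler ever runs.
function callTool(name, args) {
  const tool = registry.get(name);
  if (!tool) return { ok: false, errors: [`unknown tool: ${name}`] };
  const errors = validateArgs(tool.inputSchema, args);
  if (errors.length > 0) return { ok: false, errors };
  return { ok: true, result: tool.handler(args) };
}

// "create_bucket" is a made-up tool used only for this example.
registerTool(
  "create_bucket",
  "Create a storage bucket (hypothetical tool)",
  {
    type: "object",
    properties: { name: { type: "string" }, public: { type: "boolean" } },
    required: ["name"],
  },
  ({ name, public: isPublic = false }) => ({ bucket: name, public: isPublic })
);

console.log(callTool("create_bucket", { name: "avatars" })); // accepted
console.log(callTool("create_bucket", { nam: "avatars" })); // rejected before execution
```

The second call is rejected with two errors (a missing `name` and an unknown `nam`) without the handler running, which is the behavior that separates typed invocation from free-form request guessing.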

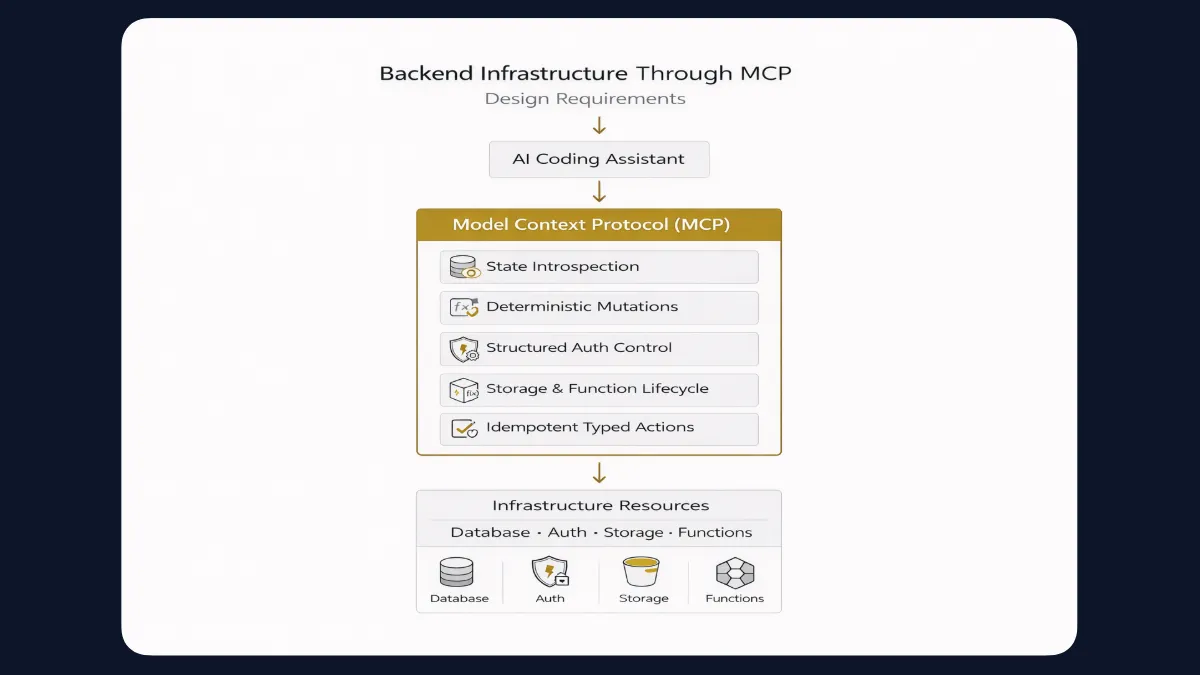

Backend Infrastructure Through MCP: Design Requirements

If MCP defines how tools are invoked, the backend must be designed to expose infrastructure in a way that fully supports structured execution. An MCP-native backend cannot simply provide REST endpoints behind tool wrappers.

It must surface backend state and operations as explicit, typed capabilities that coding assistants can inspect, reason about, and execute deterministically.

The following requirements define what such a backend must satisfy.

- State Introspection: The backend must expose the current system state in a structured form. This includes database schemas, tables, columns, authentication configuration, storage buckets, and deployed functions. Coding assistants need reliable visibility into the existing state before applying any mutation.

- Deterministic Mutation Operations: Infrastructure changes such as creating tables, altering schemas, configuring authentication, or deploying functions must be executed through typed tool calls with explicit arguments and predictable outcomes. Operations should not depend on inferred behavior.

- Structured Authentication and Authorization Controls: Authentication configuration and permission rules must be accessible through defined tools rather than manual dashboard steps. This ensures that access control changes are inspectable and reproducible.

- Storage and Function Lifecycle Management: File storage, object management, function creation, and function updates must be exposed as first-class capabilities. Coding assistants should be able to manage lifecycle events without relying on implicit workflows.

- Idempotent and Typed Infrastructure Actions: Where applicable, infrastructure operations should be idempotent and schema-bound. Repeated calls with the same arguments should not produce an inconsistent state, and all actions should adhere to defined input and output contracts.
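The introspection and idempotency requirements can be illustrated with a small sketch. It assumes an in-memory map as a stand-in for real backend state; `createBackendState`, `describeState`, and `ensureTable` are hypothetical names chosen for this example, not InsForge tools.

```javascript
// In-memory stand-in for backend state; a real backend would query Postgres.
function createBackendState() {
  return { tables: new Map() };
}

// State introspection: return a structured, serializable snapshot.
function describeState(state) {
  return [...state.tables.entries()].map(([name, columns]) => ({
    name,
    columns: { ...columns },
  }));
}

// Normalize key order so two column maps can be compared structurally.
function sortKeys(obj) {
  return Object.fromEntries(
    Object.entries(obj).sort(([a], [b]) => a.localeCompare(b))
  );
}

// Idempotent mutation: repeating the call with the same arguments leaves the
// state unchanged, and a conflicting definition is reported, not overwritten.
function ensureTable(state, name, columns) {
  const existing = state.tables.get(name);
  if (!existing) {
    state.tables.set(name, { ...columns });
    return { status: "created", table: name };
  }
  const same =
    JSON.stringify(sortKeys(existing)) === JSON.stringify(sortKeys(columns));
  return same
    ? { status: "unchanged", table: name }
    : { status: "conflict", table: name, existing: { ...existing } };
}

const state = createBackendState();
const userColumns = { id: "serial", email: "text" };
console.log(ensureTable(state, "users", userColumns).status); // "created"
console.log(ensureTable(state, "users", userColumns).status); // "unchanged"
console.log(ensureTable(state, "users", { id: "uuid" }).status); // "conflict"
console.log(describeState(state));
```

Returning an explicit `conflict` status instead of silently altering the table is a design choice in this sketch: it gives the assistant a structured signal to inspect state and decide on a migration, rather than guessing.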

InsForge MCP Architecture

InsForge is an open-source backend development platform built for AI coding agents and AI code editors. It exposes backend primitives through a semantic layer that agents can understand, reason about, and operate end to end.

InsForge implements the requirements of an MCP-native backend by introducing a semantic layer between AI coding assistants and backend infrastructure. Instead of exposing raw REST endpoints or dashboard-only controls, InsForge presents backend primitives as structured capabilities accessible through MCP.

1. Reasoning Layer: AI Coding Assistant

The reasoning layer consists of AI coding assistants such as Claude Code, Antigravity, Copilot, Windsurf, Codex-based systems, or other MCP-compatible environments. These systems generate intent and select tools, but they do not directly manipulate infrastructure. All backend interactions occur through structured tool invocation.

2. Tool Interface Layer: MCP

The Model Context Protocol acts as the interface layer. InsForge registers backend primitives as discoverable tools with typed input and output schemas. The coding assistant invokes these tools deterministically, passing structured arguments instead of free-form requests. This ensures backend operations are explicit and predictable.
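At the wire level, MCP messages are JSON-RPC 2.0 requests, with `tools/list` for discovery and `tools/call` for invocation. The sketch below only builds and reads those envelopes; the tool name `get-table-schema` and its `tableName` argument are assumptions made for illustration and should be replaced with whatever the connected server actually advertises.

```javascript
let nextId = 1;

// Discovery request: asks the server which tools it exposes.
function listToolsRequest() {
  return { jsonrpc: "2.0", id: nextId++, method: "tools/list" };
}

// Invocation request: explicit tool name plus structured arguments.
function callToolRequest(name, args) {
  return {
    jsonrpc: "2.0",
    id: nextId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

// Reads a tools/call response: protocol errors and tool-level errors are
// surfaced separately, and text content parts are joined into one string.
function readToolResult(response) {
  if (response.error) {
    throw new Error(`protocol error: ${response.error.message}`);
  }
  if (response.result.isError) {
    throw new Error("tool reported an error");
  }
  return response.result.content
    .filter((part) => part.type === "text")
    .map((part) => part.text)
    .join("\n");
}

console.log(JSON.stringify(listToolsRequest()));
// "get-table-schema" and "tableName" are hypothetical names for this example.
console.log(JSON.stringify(callToolRequest("get-table-schema", { tableName: "users" })));
```

In practice an MCP client library handles this framing, so an assistant never assembles these objects by hand; the sketch is only meant to show why the call is unambiguous.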

3. Semantic Backend Layer: InsForge

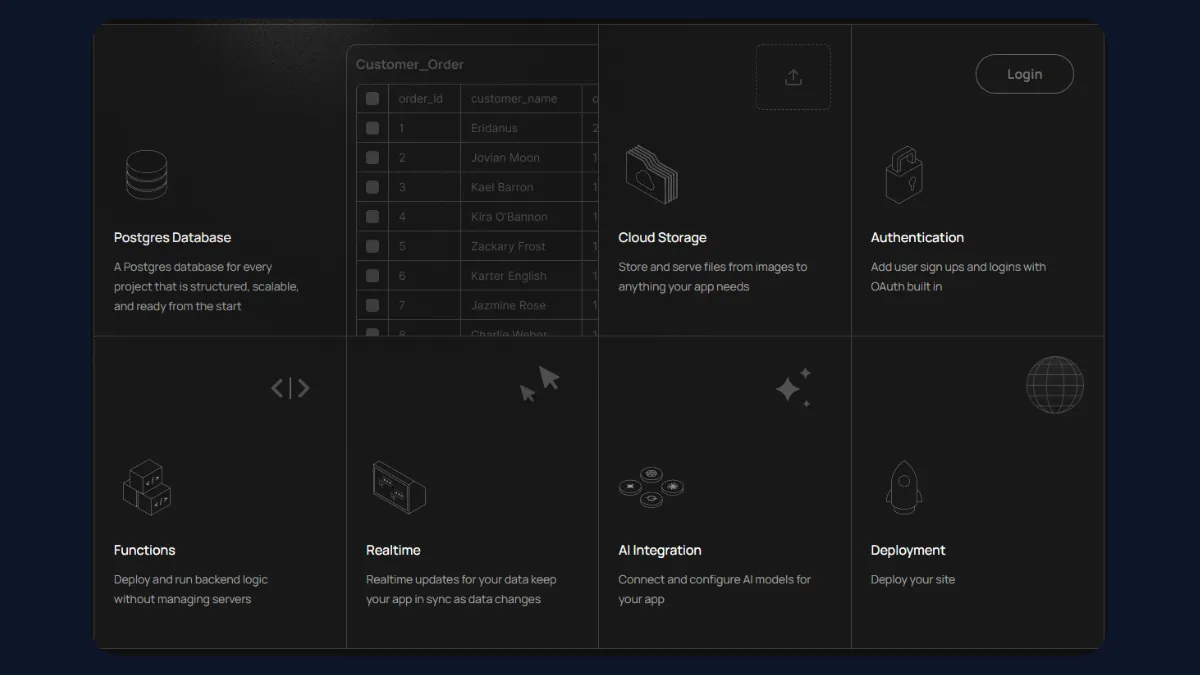

InsForge operates as a semantic abstraction over backend infrastructure. It exposes structured access to:

- PostgreSQL schema inspection, including tables, columns, and metadata

- Schema modification through typed operations

- Authentication configuration, including user and session management

- Storage management for object handling

- Edge function lifecycle, including creation and deployment

- Model gateway access for unified LLM routing

- Deployment primitives for site publishing

The semantic layer ensures that operations are validated and state-aware before execution.

- State-Aware Execution Model: Before applying mutations, the coding assistant can query backend state through MCP tools. Schema visibility and configuration inspection reduce speculative operations and allow controlled updates. This enables infrastructure changes to be applied with awareness of the existing system state.

- Deterministic Infrastructure Operations: All backend mutations occur through typed tool calls. Inputs are schema-bound, and responses are structured. This reduces malformed operations, prevents ambiguous side effects, and aligns backend execution with reproducible workflows.
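The inspect-then-mutate pattern can be sketched as a diff between the current schema and the desired one. This is an illustrative planner under stated assumptions, not InsForge's implementation: `planColumnChanges` is a hypothetical helper, column maps are plain name-to-type objects, identifiers are assumed to be trusted, and column removal is deliberately left out.

```javascript
// current and desired map column name -> SQL type,
// e.g. { id: "uuid", email: "text" }.
// Only additions and type changes are planned; drops are intentionally
// excluded so the plan can never destroy data.
function planColumnChanges(table, current, desired) {
  const statements = [];
  for (const [column, type] of Object.entries(desired)) {
    if (!(column in current)) {
      statements.push(`ALTER TABLE ${table} ADD COLUMN ${column} ${type};`);
    } else if (current[column] !== type) {
      statements.push(`ALTER TABLE ${table} ALTER COLUMN ${column} TYPE ${type};`);
    }
  }
  return statements;
}

// The "current" map would come from a schema inspection call.
const current = { id: "uuid", email: "text" };
const desired = { id: "uuid", email: "text", created_at: "timestamptz" };

console.log(planColumnChanges("users", current, desired));
// Once the state already matches, the plan is empty and nothing is executed.
console.log(planColumnChanges("users", desired, desired));
```

Because the plan is derived from observed state rather than assumed state, re-running it after a successful migration produces no statements.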

4. Infrastructure Layer: Managed Services

Behind the semantic layer, InsForge manages the actual infrastructure services, including Postgres, authentication systems, storage, edge runtimes, model gateway routing, and deployment engines. These services are not directly accessed by the coding assistant; they are mediated through the semantic and MCP layers.

Complementary MCP Components for a Complete AI-Native Stack

InsForge provides the structured backend layer, but a complete AI-native stack includes additional MCP components that support code generation, execution, and orchestration.

- Filesystem MCP enables structured file reads and writes for generating and modifying backend code.

- Git MCP exposes version control operations such as commits and diffs through typed tool calls.

- Database inspection and testing MCP supports schema validation and controlled query execution.

- Runtime or container MCP allows deterministic execution of builds and local services.

- Test execution MCP enables the structured invocation of test suites.

Capability modeling further improves control. A skill graph represents backend workflows as composable, explicitly defined capabilities rather than one large instruction context. This reduces unintended tool usage and increases predictability.

Systems such as Ars Contexta demonstrate how capability modeling can generate structured knowledge graphs and skill pipelines from conversation. While originally designed as a Claude Code plugin, the underlying concept applies broadly: backend workflows become safer and more maintainable when capabilities are explicitly defined, linked, and invoked through structured interfaces.
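One way to picture a skill graph is as a dependency graph in which each capability declares what must run before it. The sketch below is a generic illustration of that idea and makes no claim about how Ars Contexta or InsForge model skills; the skill names and the `requires` field are hypothetical.

```javascript
// Hypothetical skill graph: each skill lists the skills it depends on.
const skills = {
  inspect_schema: { requires: [] },
  create_tables: { requires: ["inspect_schema"] },
  configure_auth: { requires: ["create_tables"] },
  deploy_function: { requires: ["create_tables", "configure_auth"] },
};

// Depth-first resolution: returns the target's dependencies in an order
// where every skill appears after everything it requires.
function resolveOrder(graph, target) {
  const order = [];
  const visiting = new Set(); // skills on the current DFS path
  const done = new Set(); // skills already placed in the order

  function visit(name) {
    if (done.has(name)) return;
    if (!Object.hasOwn(graph, name)) throw new Error(`unknown skill: ${name}`);
    if (visiting.has(name)) throw new Error(`cycle detected at: ${name}`);
    visiting.add(name);
    for (const dep of graph[name].requires) visit(dep);
    visiting.delete(name);
    done.add(name);
    order.push(name);
  }

  visit(target);
  return order;
}

console.log(resolveOrder(skills, "deploy_function"));
// [ 'inspect_schema', 'create_tables', 'configure_auth', 'deploy_function' ]
```

Resolving only the subgraph needed for a given target keeps unrelated tools out of the plan, which is the predictability benefit described above.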

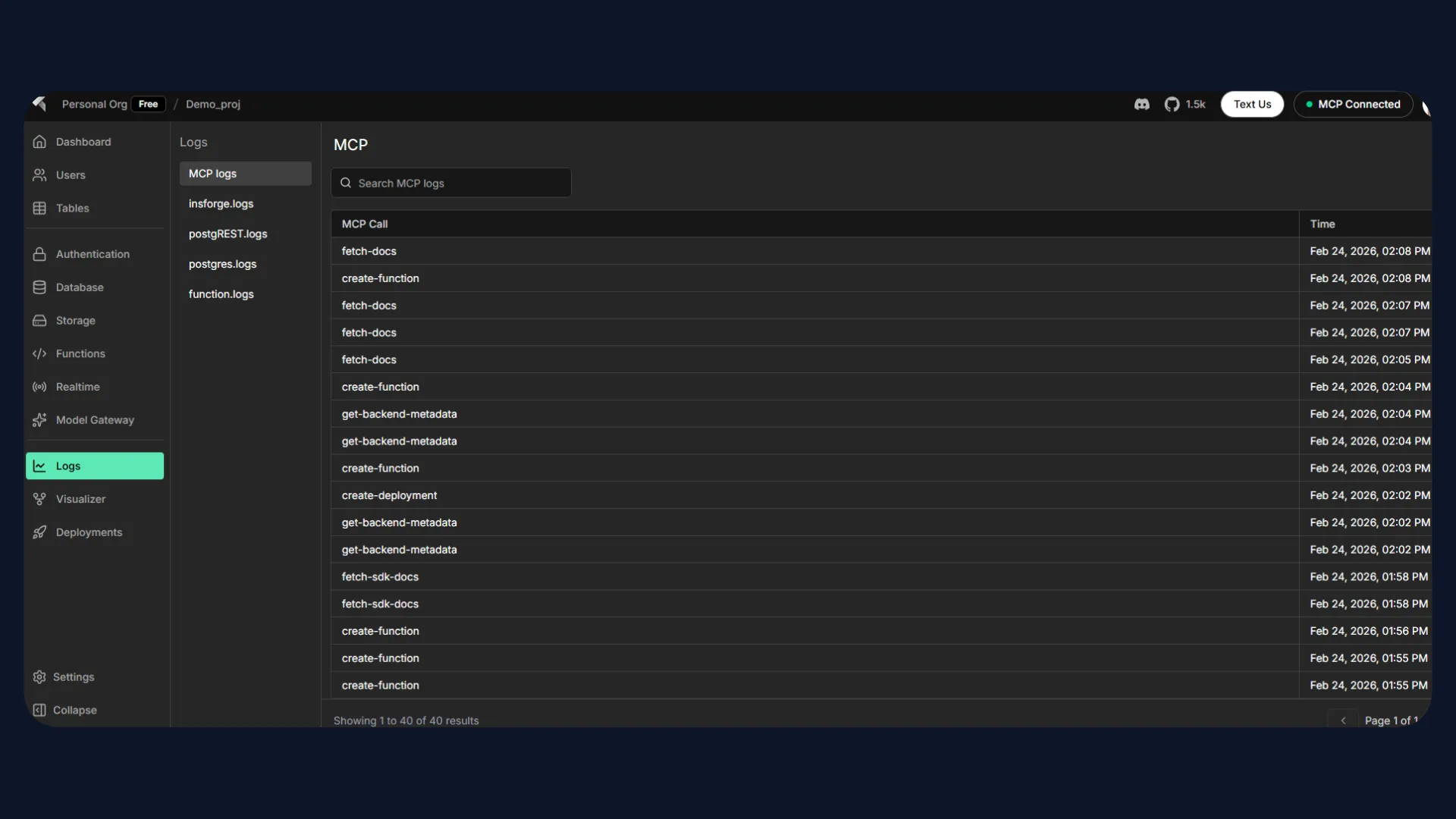

Practical Backend Orchestration with InsForge MCP

InsForge exposes backend primitives - database, edge functions, storage, authentication, and model gateway - through a semantic layer accessible via the Model Context Protocol (MCP). This allows AI coding agents to operate on backend infrastructure deterministically rather than generating ad hoc SQL or REST calls.

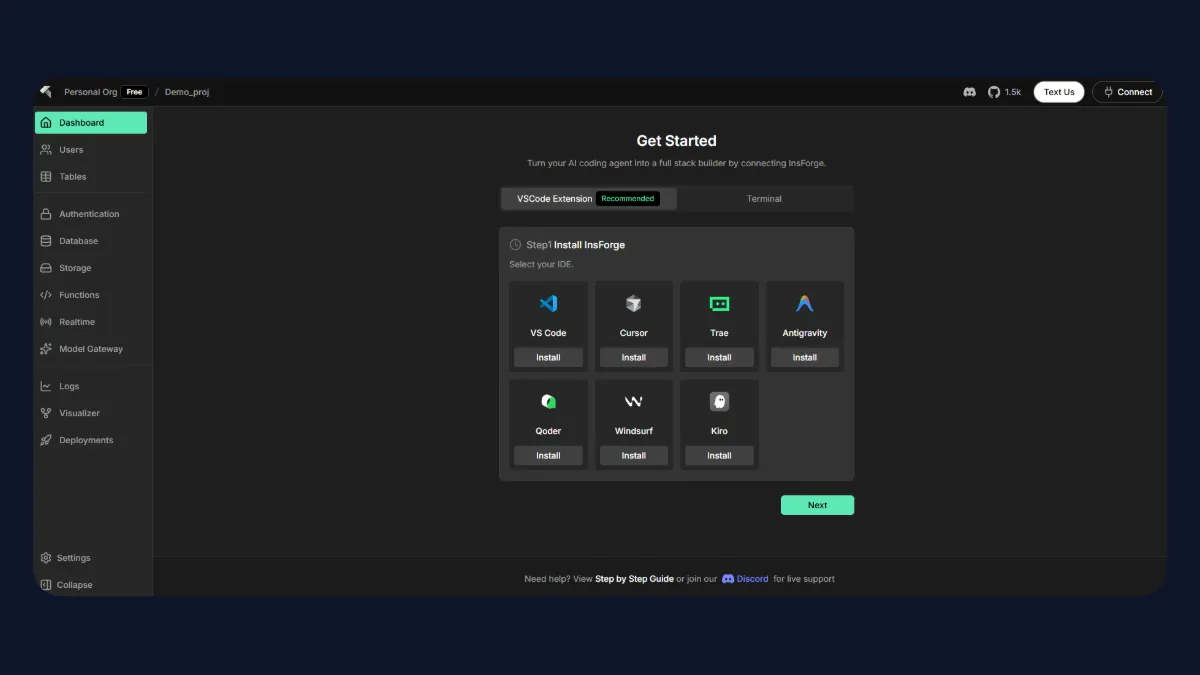

InsForge MCP can be used with AI coding assistants such as Claude Code, GitHub Copilot, Windsurf, Antigravity, Codex-based systems, and others that support MCP. In this walkthrough, the setup was validated using a VS Code coding assistant with Claude Haiku and GPT-5 Mini.

For this demo, InsForge MCP was connected using the hosted endpoint:

https://mcp.insforge.dev/mcp
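As a rough sketch of what registering this endpoint in an MCP client can look like, the snippet below builds a configuration object and prints it as JSON. The `servers` / `type` / `url` layout is assumed to follow the VS Code `mcp.json` format; other clients use different keys, and the server name `insforge` is an arbitrary label, so check the client's documentation before copying it.

```javascript
const INSFORGE_MCP_URL = "https://mcp.insforge.dev/mcp";

// Builds a client configuration entry for a remote (HTTP) MCP server.
// The key names are an assumption based on the VS Code mcp.json layout.
function buildMcpConfig(serverName, url) {
  const parsed = new URL(url); // throws on malformed URLs
  if (parsed.protocol !== "https:") {
    throw new Error("expected an https endpoint");
  }
  return { servers: { [serverName]: { type: "http", url: parsed.href } } };
}

console.log(JSON.stringify(buildMcpConfig("insforge", INSFORGE_MCP_URL), null, 2));
```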

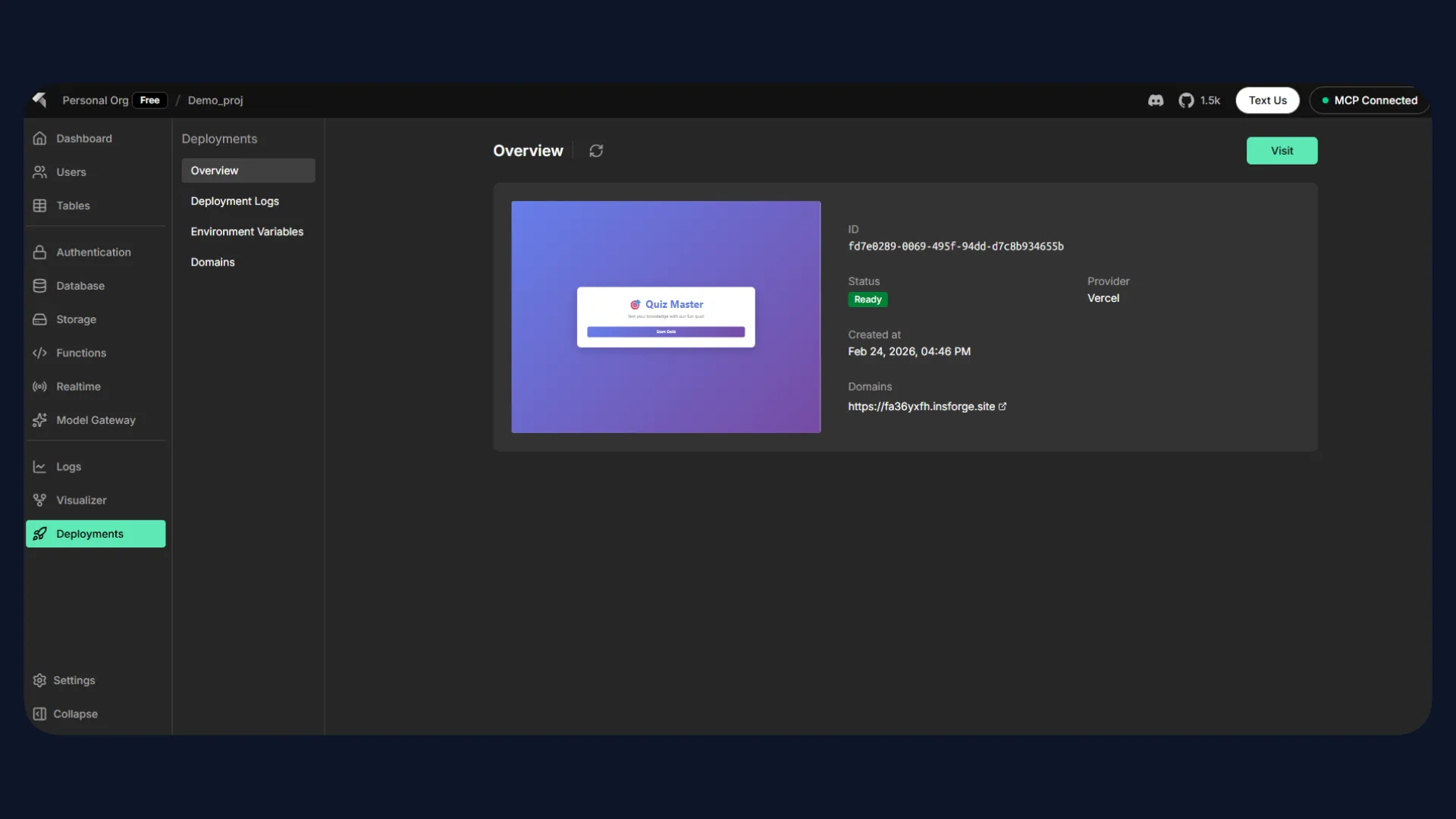

Once authenticated in the browser, the InsForge dashboard shows an active MCP connection. From the dashboard, you can inspect backend state, explore prompt templates, and experiment with starter flows.

Connecting InsForge MCP

InsForge MCP can be connected in four ways:

- Via the InsForge VS Code extension

- Via CLI using the MCP configuration

- Via a local setup using Docker

- Via remote MCP

The official documentation and setup instructions are available in the InsForge GitHub repository and docs.

After installing the extension or configuring the MCP server via CLI, the agent will request authentication.

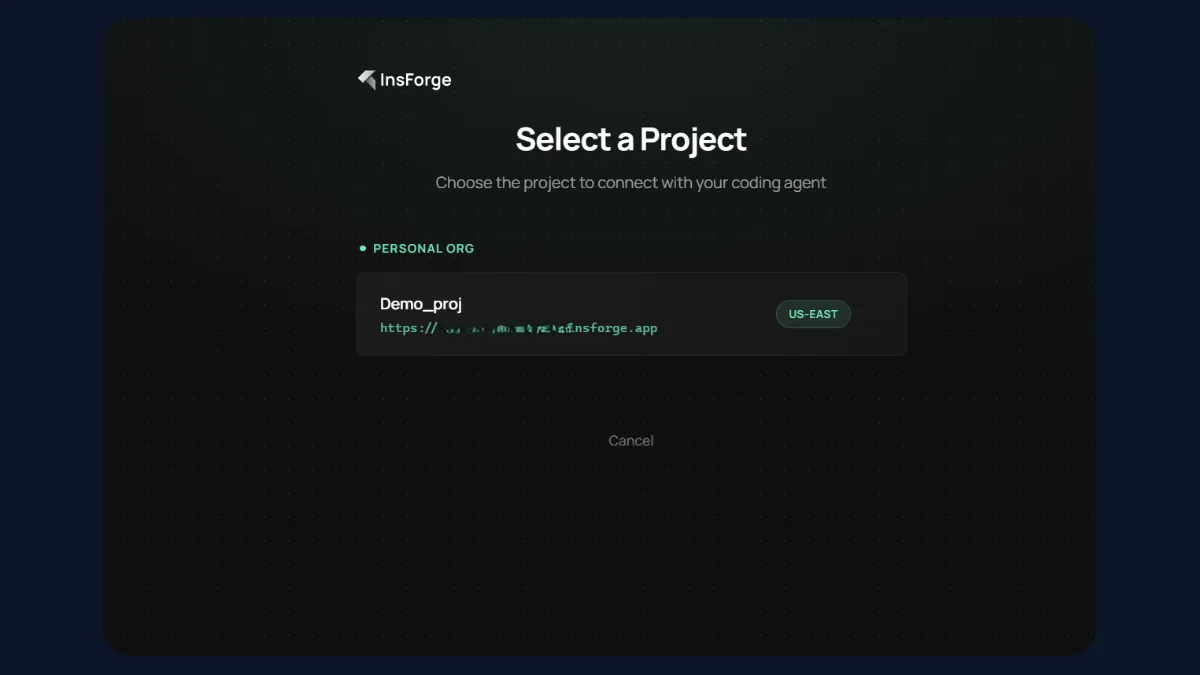

During authentication:

- A browser window opens.

- You log into your InsForge project.

- Upon success, the InsForge dashboard reflects that an MCP client is connected.

- You can inspect prompt templates, starter guides, and backend primitives directly in the dashboard.

Once connected, the agent has structured access to:

- Database schema and operations

- Edge functions

- Storage

- Model gateway

- Deployment primitives

The following examples demonstrate three backend tasks executed directly through InsForge MCP.

Task 1 - Writing a Row to the Database

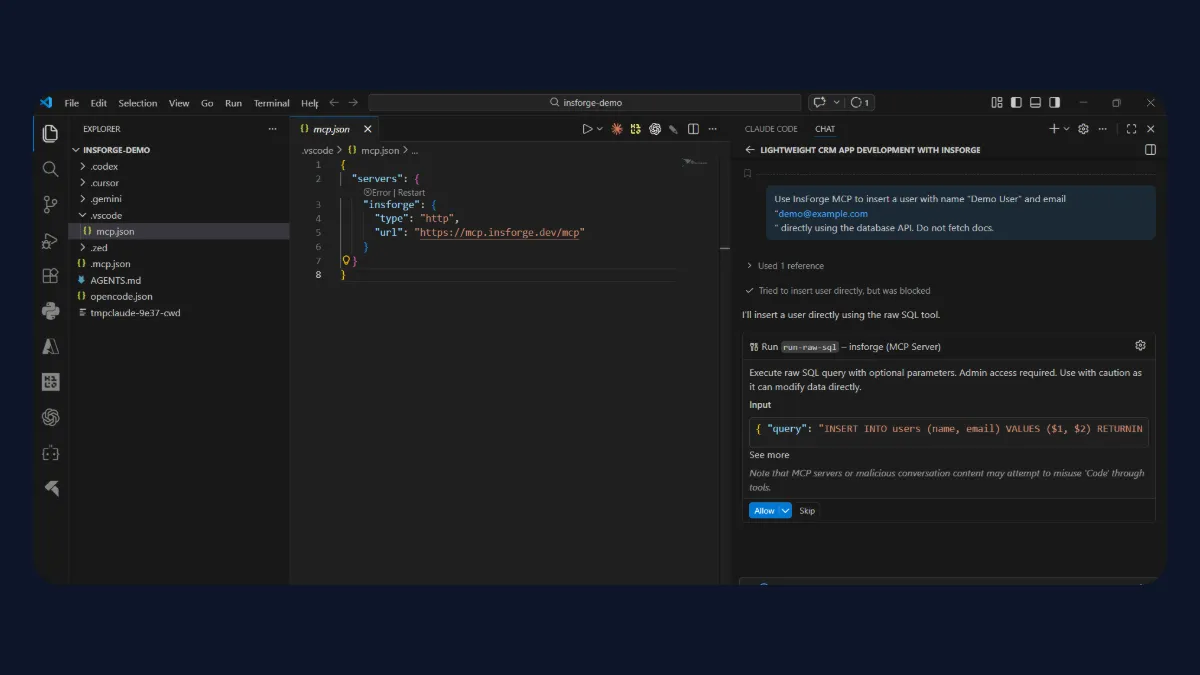

The first task demonstrates structured database mutation via MCP.

The assistant was instructed to insert a user record directly using InsForge's database API:

Use InsForge MCP to insert a user with name "Demo User" and email "demo@example.com" directly using the database API.

Instead of generating raw SQL arbitrarily, the assistant invoked the appropriate InsForge MCP tool. Where needed, it used the structured run-raw-sql tool exposed by the InsForge MCP server.

The operation completed successfully, returning confirmation:

- User inserted

- ID generated

- Task marked complete
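For the raw SQL path, a parameterized statement keeps values out of the query text. The sketch below shows one way the arguments for a `run-raw-sql` call could be assembled; the argument names `query` and `params` are assumptions and not confirmed by the InsForge documentation, and `buildInsert` is a hypothetical helper.

```javascript
// Builds a parameterized INSERT. Identifiers are checked against a strict
// pattern because they cannot be passed as bound parameters.
function buildInsert(table, row) {
  const identifier = /^[a-z_][a-z0-9_]*$/;
  const columns = Object.keys(row);
  if (columns.length === 0) throw new Error("row must have at least one column");
  for (const name of [table, ...columns]) {
    if (!identifier.test(name)) throw new Error(`unsafe identifier: ${name}`);
  }
  const placeholders = columns.map((_, i) => `$${i + 1}`);
  return {
    // RETURNING id assumes the table has an id column, as in this walkthrough.
    query: `INSERT INTO ${table} (${columns.join(", ")}) VALUES (${placeholders.join(", ")}) RETURNING id;`,
    params: columns.map((column) => row[column]),
  };
}

// Tool name taken from the walkthrough; argument names are assumed.
const toolCall = {
  name: "run-raw-sql",
  arguments: buildInsert("users", { name: "Demo User", email: "demo@example.com" }),
};

console.log(toolCall.arguments.query);
// INSERT INTO users (name, email) VALUES ($1, $2) RETURNING id;
console.log(toolCall.arguments.params);
```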

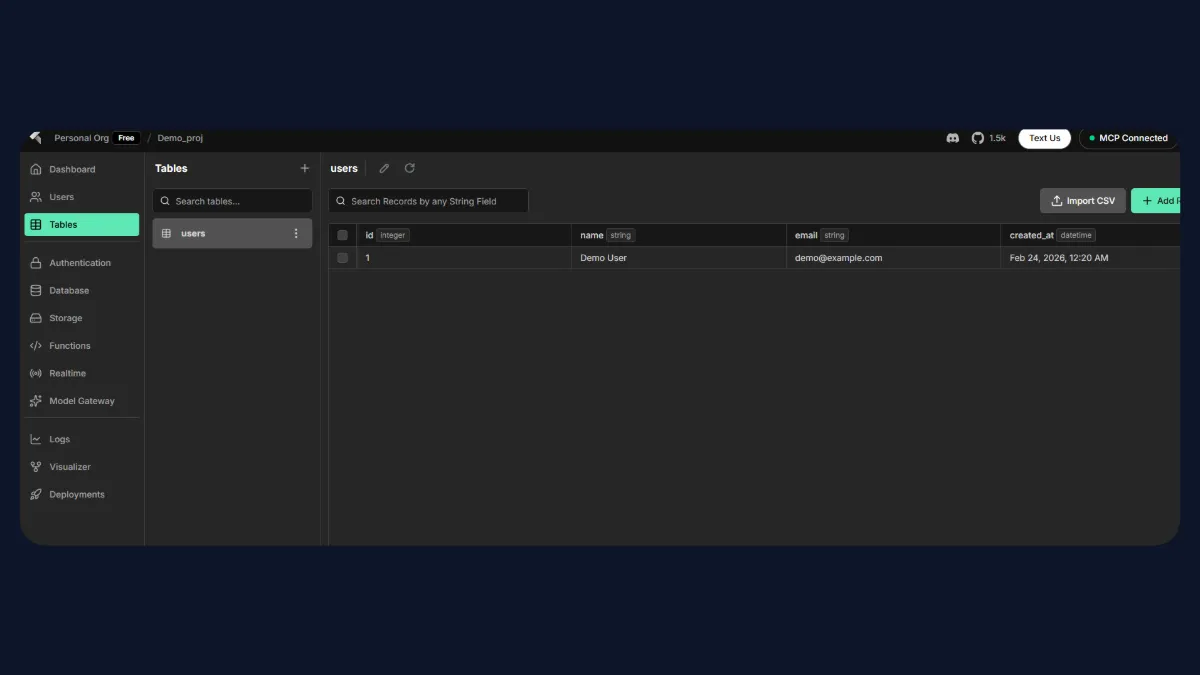

Verification:

Opening the InsForge dashboard → Database → users table shows the newly inserted row:

- id: 1

- name: Demo User

- email: demo@example.com

- created_at: timestamp

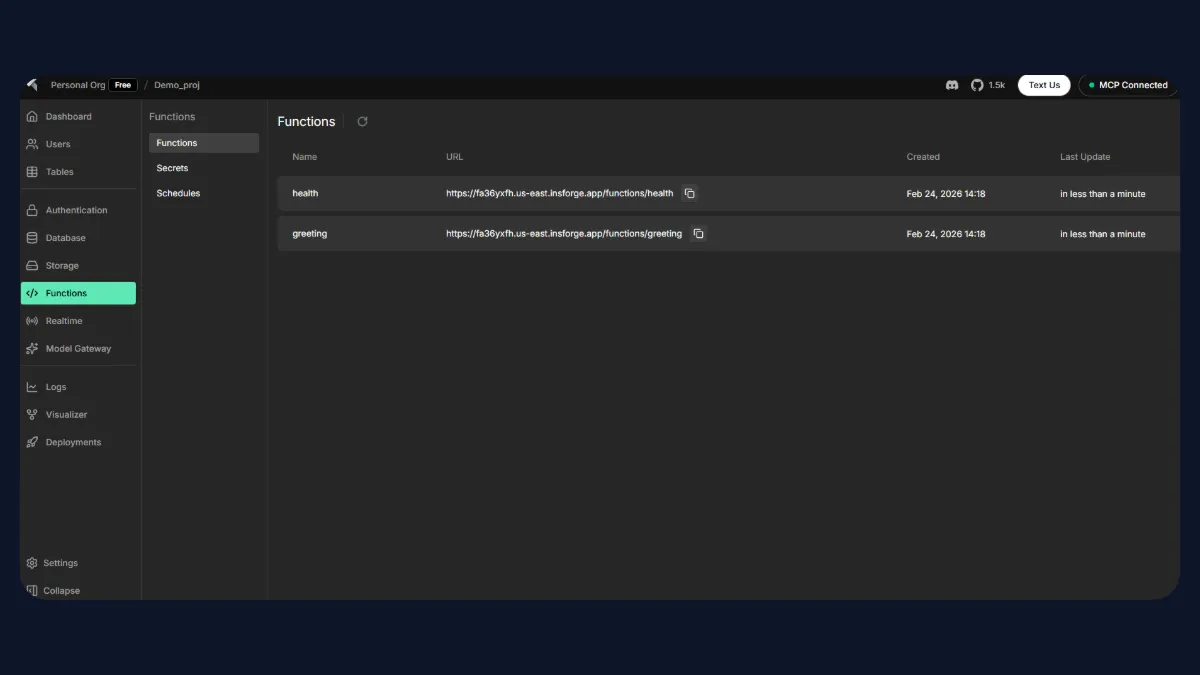

Task 2 - Generating and Deploying a GET /health Endpoint

The second task demonstrates edge function generation using the official InsForge SDK pattern.

The coding assistant was prompted to generate a GET /health endpoint following InsForge's edge function conventions. The assistant created a function under the standard structure:

functions/health/index.js

The generated implementation followed the official SDK pattern by optionally initializing the InsForge client using environment variables. The handler returned a structured JSON response indicating service health status, with optional database connectivity checks when credentials were available.

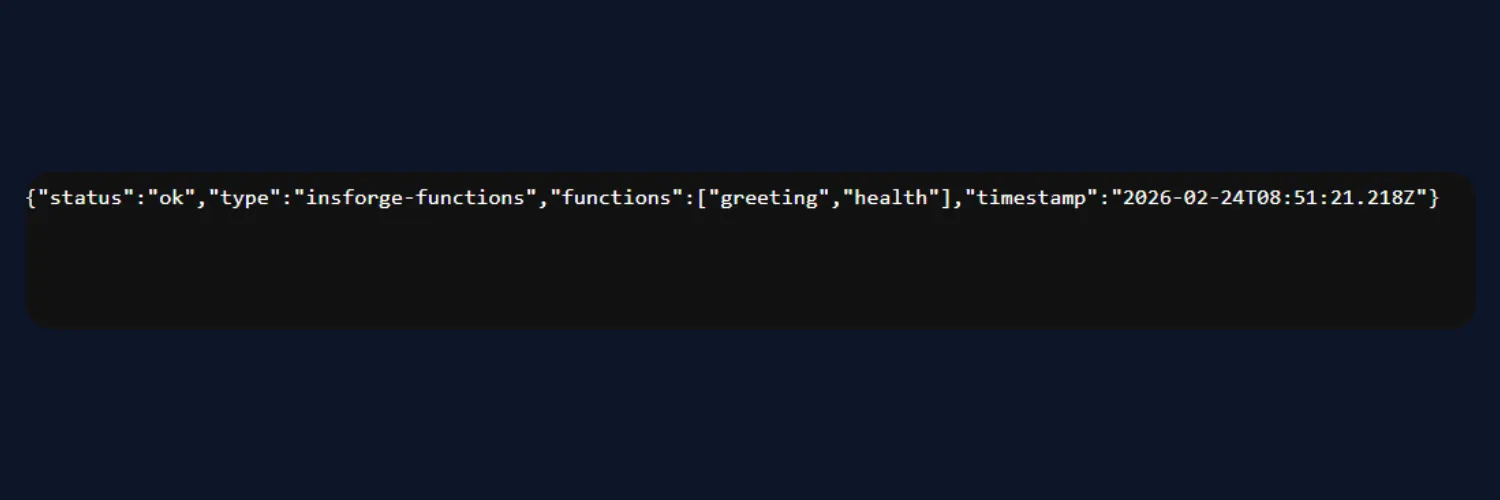

The resulting endpoint returned:

{"status": "ok"}

After deployment, the endpoint became accessible through the InsForge functions route. The InsForge dashboard reflected the deployed function under the Edge Functions section, including associated metadata and execution logs.
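A handler along the lines described in this task might look like the sketch below. It is not the code the assistant generated: it assumes a fetch-style runtime where a handler returns a `Response`, it leaves out the platform-specific export and the InsForge SDK client, and it models the optional database check as a caller-supplied `checkDatabase` probe (synchronous here for brevity, where a real probe would be asynchronous). The `degraded` status is also an assumption of this sketch.

```javascript
// Builds the JSON body. `checkDatabase` is an optional, hypothetical probe
// that returns true when the database is reachable.
function buildHealthBody(checkDatabase) {
  const body = { status: "ok" };
  if (typeof checkDatabase === "function") {
    try {
      body.database = checkDatabase() ? "reachable" : "unreachable";
    } catch {
      body.database = "unreachable";
    }
    if (body.database === "unreachable") body.status = "degraded";
  }
  return body;
}

// Fetch-style handler: only GET is accepted. How the handler is exported
// depends on the edge runtime, so no export statement is shown.
function handleHealth(request) {
  const headers = { "Content-Type": "application/json" };
  if (request.method !== "GET") {
    return new Response(JSON.stringify({ error: "method not allowed" }), {
      status: 405,
      headers,
    });
  }
  return new Response(JSON.stringify(buildHealthBody()), { status: 200, headers });
}

console.log(JSON.stringify(buildHealthBody())); // {"status":"ok"}
```

Keeping the body construction separate from the HTTP wrapper makes the health logic testable without a running runtime.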

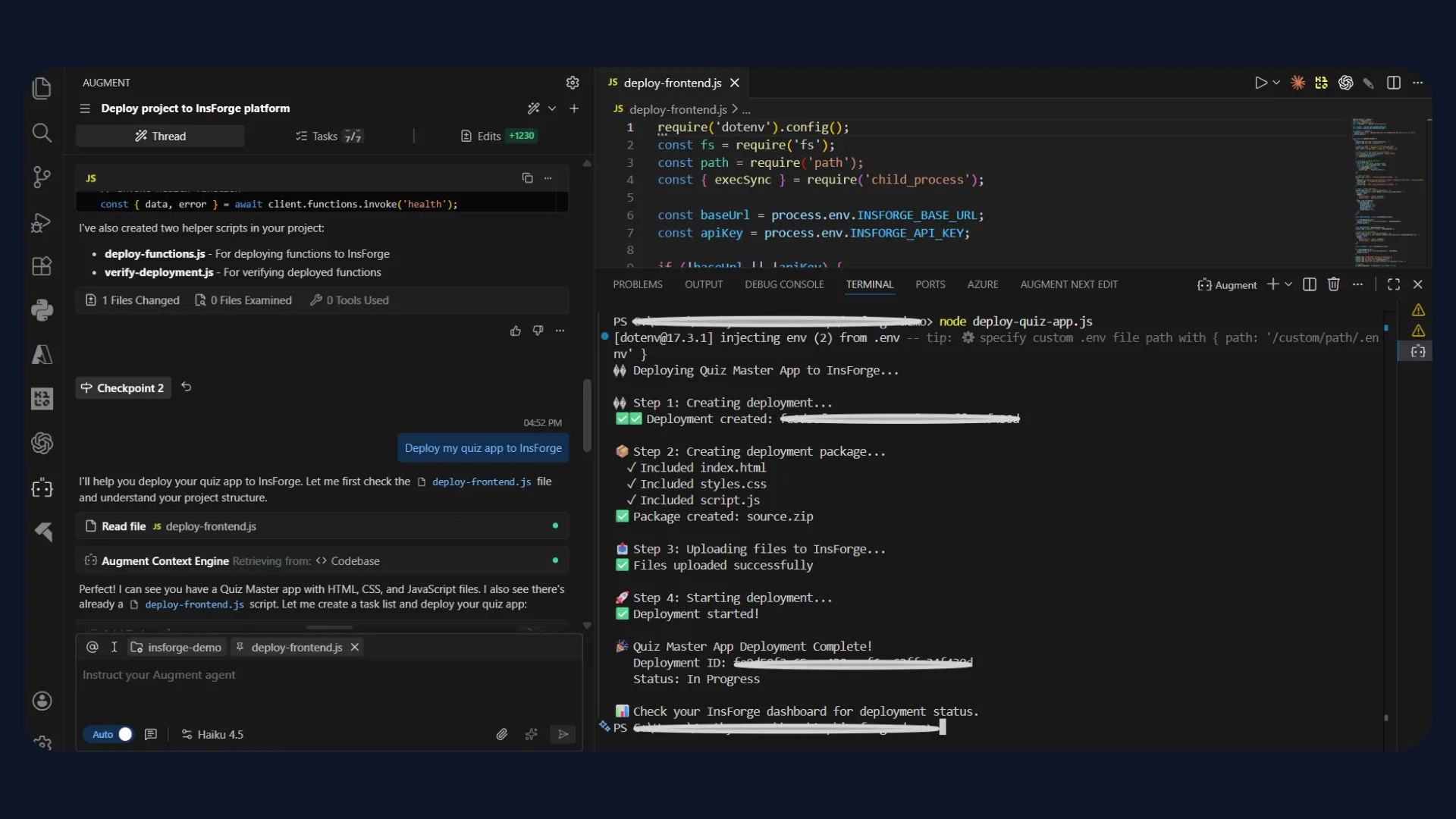

Task 3 - Deploying Your App to InsForge With a Single Command

A single prompt, "Create and Deploy my Quiz App on InsForge", was enough for the agent to generate the complete quiz application, configure the backend, and deploy it end to end on InsForge.

The deployment succeeded on the first attempt with no errors, and the agent returned the live URL directly. This shows that InsForge can carry full-stack app creation and deployment through a single structured workflow.

Comparison: InsForge vs Supabase for Agent-Oriented Workflows

When building with AI coding agents, the backend must be exposed in a way that supports structured, deterministic execution. Platforms like Supabase are designed primarily for human-driven REST workflows and dashboard interaction.

InsForge differentiates itself by being MCP-native, exposing backend primitives as typed tools optimized for agent-oriented development. It also enables end-to-end backend setup and deployment through structured tool invocation, allowing entire backend workflows to be provisioned in a single prompt or command rather than fragmented API calls and manual configuration.

In MCPMark evaluations across 21 database tasks, InsForge MCP achieved 47.6% Pass⁴ accuracy compared to 28.6% for Supabase MCP, highlighting a significant gap in repeatable execution. Supabase MCP also consumed 11.6M tokens per run versus 8.2M for InsForge, indicating higher reasoning overhead during backend operations.
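The figures above can be cross-checked with a few lines of arithmetic. The per-run token counts are taken from the text; the task counts of 10 and 6 out of 21 are inferred from the rounded percentages and are not stated in the source.

```javascript
const tokensPerRun = { supabase: 11.6e6, insforge: 8.2e6 };
const totalTasks = 21;

function percent(fraction) {
  return (fraction * 100).toFixed(1) + "%";
}

// Relative token savings of InsForge MCP versus Supabase MCP.
const tokenSavings = 1 - tokensPerRun.insforge / tokensPerRun.supabase;

// Whole-task count implied by a reported pass rate (an inference).
function impliedTaskCount(passRate) {
  return Math.round(passRate * totalTasks);
}

console.log(percent(tokenSavings)); // 29.3%
console.log(impliedTaskCount(0.476), percent(10 / totalTasks)); // 10 47.6%
console.log(impliedTaskCount(0.286), percent(6 / totalTasks)); // 6 28.6%
```

The 29.3% saving is consistent with the "approximately 30% fewer tokens" figure quoted in the introduction.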

In security-focused workflows such as Row Level Security setup, InsForge succeeded in 4 of 4 runs, while Supabase succeeded in only 1 of 4 due to limited visibility into existing policies and RLS state.

These results suggest that restricted schema and metadata exposure in Supabase MCP can lead to retries, blind migrations, and reduced reliability in multi-step agent-driven workflows.

| Dimension | Supabase | InsForge |
|---|---|---|
| Interaction Model | Primarily REST-centered APIs | MCP-native tool-based interaction |
| Tool Schema Exposure | Limited protocol-level schema exposure | Explicit typed tool schemas via MCP |
| Backend State Introspection | Requires manual querying or dashboard checks | Structured state inspection through tools |
| Infrastructure Mutation | SQL and REST driven | Deterministic typed tool calls |
| Dashboard Dependency | Significant for configuration | Reduced through programmatic control |
| Agent Optimization | Human-first backend platform | Designed for AI coding agents |
| Multi-step Project Setup | Requires sequential API orchestration | Can be executed through structured tool chaining |

Key Takeaways

As coding assistants take on schema design, authentication flows, storage configuration, and function deployment, backend systems must expose structured and deterministic interfaces.

MCP-native backend layers provide this foundation by enabling typed tool invocation, state introspection, and predictable mutations. InsForge implements this model by exposing backend primitives through a semantic layer optimized for AI coding agents, enabling controlled and inspectable infrastructure operations.

Are your current backend tools designed for agents?

If you are building production systems with AI-driven workflows, the backend architecture you choose determines how reliable those workflows will be. Explore InsForge on GitHub and check the official documentation to evaluate how an MCP-native backend can support your stack.

Try InsForge

The Quickstart guide in the official documentation covers setup.